Best Practice & Heuristics

Note: This article focuses on the basic concept of Best Practice understood by most organisations and does not cover the Cynefin models of Best, Good, Emerging, and Novel Practice in their relevant domains – more on that another time!

Best Practices exist in business for a reason. When we need to do something optimally, we can determine over time, or through prediction, the methodology with the least cost and risk for the output. In a perfect world, we want something that works first time, every time. This is the level many people in business tend to work at (or ignore!).

However, real life has an unpleasant if invigorating habit of not always providing us with a clear application of Best Practice – a wonderful opportunity to learn which we might not appreciate, say, mid-disaster. Time constraints, political expedience, resource limitations or complexity can all get soundly in the way.

Best Practice can also be a misnomer. Oddly, the approved, documented, step-driven way of doing things correctly to ensure consistent results can sometimes be less effective than doing things another way, as long as you are cognizant of the pitfalls and expected results (usually via a solid mixture of expertise and experience). This means we sometimes have to choose between the proven, approved methodology and the effective methodology to achieve a goal within constraints. Further still, sometimes Best Practice simply cannot be applied to resolve an issue at all.

What we see here, then, is a disparity between Best Practice, the approved and optimal way to achieve a goal (whether hypothesised or tested in sterile conditions), and Heuristics, a more practical approach to problem solving or learning that has no guarantee of being optimal (or sometimes even rational) but will adequately reach an immediate goal in the real world.

Or:

Best Practices as the supported optimal hypothetical route to achieve a long-term goal

Heuristics as a rule-of-thumb route to achieve an immediate goal adequately in practice

The ability to choose the correct one to solve a problem is as important as having either. Applying the wrong one will at best waste your time, and at worst make the problem far worse.

Demonstrating the difference

Let’s look at a relatively simple example of this. Because I’m from a technical background originally, I’ll use an IT example (which may seem complicated, but it is actually quite logical):

Moving data between storage arrays

Take a Data Protection solution (the Base) running on Windows, which holds its System Independent Format (SIDF) data on simple drive letters, typically storage arrays, as primary storage. There are caveats: it is a live system, constantly accepting and sending data as well as maintaining the data it stores; the data could run into the tens of TB; and the SIDF files for each task are exclusively locked during processing, which requires a task-priority-driven queue to manage. The strong preference is that critical backups are not interrupted if possible.

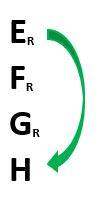

Let’s suppose you run three basic RAID 5 arrays, each presented as one volume (E, F and G), and you wish to replace E because it is older or unreliable hardware. E is marked Read-Only so that no new data can be written to it. Moving data between these is simple:

In this particular solution, the “Move to another Location” command is used, as shown above. This tells the solution that E will be retired. Data is automatically redistributed to the remaining drive letters, the database indices are updated with the new locations of the data, and then E can be removed. This is simple, easy, obvious, and conforms readily to Best Practice.

So far, so good – but what if you wish to replace E with a new volume? (H being the obvious choice.)

Here we can see that by marking all drives except H Read-Only, the data has only one location it can go to (unless you have a really crummy solution, it is unlikely you can also mark the last location Read-Only!). This is quite simple, logical, and conforms readily to a Best Practice (follow these steps for the optimal outcome every time).

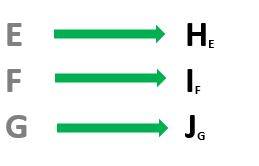

So now let’s look at what happens when the project increases in scope and complexity. Assume instead that the three volumes are split over a single RAID 5 array, and you wish to replace the whole array for future-proofing, performance or storage space reasons in a like-for-like scenario (to maintain some simplicity):

Using the above method, you add the three new volumes (which must of course be at least as large as the originals, and will typically be much larger). Here is where things cease being obvious and become complicated, although there is still a Best Practice. If you mark the originals Read-Only and use the command above to move the data, the data will be moved according to Best Practice: optimally, with updated indices in the database and clear logs. No new data will collect on E, F, or G, and once the operation is complete they can be removed from the solution as storage locations. This is the approved, proven, supported, and repeatable methodology.
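The Best Practice path in these cases rests on one rule: data may only land on locations that are not Read-Only, and the database index follows the data. The sketch below models that rule. It is purely hypothetical: `Volume`, `move_to_another_location` and the “least-filled volume first” placement rule are assumptions for illustration, not the solution’s actual API or placement logic, and the model ignores the file locking and task queue that make the real move slow.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Volume:
    """Hypothetical model of one storage location (drive letter)."""
    letter: str
    read_only: bool = False
    files: list[str] = field(default_factory=list)


def move_to_another_location(source: Volume, volumes: list[Volume],
                             index: dict[str, str]) -> None:
    """Hypothetical model of a 'Move to another Location' style operation.

    Each file on `source` goes to the writable volume currently holding the
    fewest files (an assumed placement rule), and the index entry for that
    file is updated to the new drive letter. Afterwards `source` is empty
    and could be removed as a storage location.
    """
    targets = [v for v in volumes if v is not source and not v.read_only]
    if not targets:
        # Mirrors the point that you cannot mark every location Read-Only.
        raise RuntimeError("no writable location left to receive the data")
    for name in source.files:
        target = min(targets, key=lambda v: len(v.files))  # first least-filled
        target.files.append(name)
        index[name] = target.letter  # database index now points at new location
    source.files.clear()


if __name__ == "__main__":
    # Old volumes E, F, G marked Read-Only; new volumes H, I, J writable.
    old = [Volume("E", True, ["e1.sidf"]), Volume("F", True, ["f1.sidf"]),
           Volume("G", True, ["g1.sidf"])]
    new = [Volume("H"), Volume("I"), Volume("J")]
    index = {"e1.sidf": "E", "f1.sidf": "F", "g1.sidf": "G"}
    for vol in old:
        move_to_another_location(vol, old + new, index)
    print(index)  # {'e1.sidf': 'H', 'f1.sidf': 'I', 'g1.sidf': 'J'}
```

With only one writable target (the “replace E with H” case), every file necessarily lands on H; with none, the model refuses, just as a sensible solution would.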

However!

I personally would not use this method, for a number of reasons, the main one being “the real world”, which introduces complexity, illogic, and external factors a go-go. The alternative is the Heuristic approach, defined in practice on previous occasions and arrived at by testing in extremis. It takes into account time constraints, monitoring and man-hours, Solution activity, simplicity, and effectiveness, as well as other unknown factors I cannot predict that could affect the process. It is a work-around that uses different methods to achieve a more immediate goal:

In this example, I take all Base services offline immediately after backups have finished, so the Base is not running at all. I then copy the data through Windows, directly volume to volume: E to H, F to I, G to J. On arrays, which run disks in parity, you can run multiple operations concurrently, so these are all done simultaneously. I then place a marker in each new volume (typically a Notepad document) saying “I used to be E!”, “I used to be F!” or “I used to be G!”

Then I unplug the original arrays (leaving the original data intact!), change the new volumes’ drive letters to the original ones, and restart the services (i.e. turn it back on). And the Base says, yawn… where is my data on E, F- oh, there it is. Same indices, same locations… completely different hardware.
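The copy stage of the Heuristic approach can also be scripted. This is a minimal sketch, assuming the Base services are already stopped and each old and new volume is reachable as a path; `copy_volume_with_marker`, `copy_all` and the marker file name `I_USED_TO_BE.txt` are hypothetical names chosen for illustration. It copies E to H, F to I and G to J concurrently, checks that every source file arrived, and only then writes the “I used to be E!” style marker.

```python
from __future__ import annotations

import shutil
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path

MARKER_NAME = "I_USED_TO_BE.txt"  # hypothetical marker file name


def copy_volume_with_marker(source: Path, destination: Path, old_letter: str) -> int:
    """Copy all of `source` into `destination`, verify it, then drop a marker.

    Assumes the Base services are stopped, so no SIDF file is locked or
    changing during the copy. Returns the number of files copied.
    """
    # copytree creates `destination` if needed; dirs_exist_ok needs Python 3.8+.
    shutil.copytree(source, destination, dirs_exist_ok=True)

    # Check every source file now exists at the same relative path.
    copied = [p.relative_to(source) for p in source.rglob("*") if p.is_file()]
    missing = [rel for rel in copied if not (destination / rel).is_file()]
    if missing:
        raise RuntimeError(f"{len(missing)} file(s) missing on {destination}")

    # Marker so each new volume can be matched to its old drive letter later.
    (destination / MARKER_NAME).write_text(f"I used to be {old_letter}!\n")
    return len(copied)


def copy_all(pairs: dict[str, tuple[Path, Path]]) -> dict[str, int]:
    """Run one copy per old volume at the same time, e.g. E->H, F->I, G->J."""
    with ThreadPoolExecutor(max_workers=len(pairs)) as pool:
        futures = {
            letter: pool.submit(copy_volume_with_marker, src, dst, letter)
            for letter, (src, dst) in pairs.items()
        }
        # .result() re-raises any exception from a failed copy.
        return {letter: fut.result() for letter, fut in futures.items()}
```

Reassigning the drive letters afterwards remains a manual step (for example in Disk Management). Note also that `shutil.copytree` copies with `shutil.copy2`, which on Windows does not carry over file owners, ACLs or alternate data streams, so on a real Base a dedicated tool such as robocopy may be the safer choice.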

Let’s simplify the concept of what I have done here re the arrays:

(Disclaimer: I cannot guarantee there isn’t a stone ball waiting. That’s the problem with the real world. There could be.)

I find a lot of value in using real-world examples to underpin my reasoning here:

A client had four Base machines, each holding circa 12TB. He wished to upgrade the storage on each one from a 12TB array to a much newer, larger 48TB array, and asked my advice on moving the data. I ran him through the “Chris-approved” Heuristic method and the reasoning behind it. He then got a second opinion from Support, who prescribed the Best Practice method, and he followed their advice. A copy of 12TB of data on local disk should be achievable within 8-12 hours, well within a backup window, and the Base would never know what had happened. Instead, because tasks of much higher priority (Replication, Backup, Restore, Optimisation, Expiration, etc.) were constantly running and interrupting the move, it took him almost 4 weeks! …he told me not to say “I told you so”.
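The gap is easier to appreciate as average throughput. A rough sketch of the arithmetic, assuming decimal terabytes (10^12 bytes) and taking “almost 4 weeks” as 28 days; `required_mb_per_s` is just an illustrative helper name:

```python
BYTES_PER_TB = 10**12  # decimal terabytes (assumption: vendor-style units)
BYTES_PER_MB = 10**6


def required_mb_per_s(size_tb: float, hours: float) -> float:
    """Average rate in MB/s needed to move `size_tb` terabytes in `hours` hours."""
    return size_tb * BYTES_PER_TB / (hours * 3600) / BYTES_PER_MB


# 12TB per Base: the 8-12 hour copy window versus the ~4-week Best Practice move.
for label, hours in [("8 hours", 8), ("12 hours", 12), ("4 weeks", 28 * 24)]:
    print(f"{label:>8}: {required_mb_per_s(12, hours):6.1f} MB/s")
```

That works out at roughly 416.7 MB/s for an 8-hour copy, 277.8 MB/s for a 12-hour copy, and about 5.0 MB/s for the 4-week move: the Best Practice route took somewhere between 56 and 84 times longer than the copy window.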

What lies behind Best Practice and Heuristics usage

So – let’s move out from the “technical” aspect above and look a level deeper at the concepts behind the problems faced.

Best Practice here is the company line: use the tool built for the job. Yet although the end result is the same, the difference in efficiency and process is significant. Whether it is better to transplant the data wholesale or to create entirely new indices for the same data is debatable, given that both achieve the goal, i.e. the replacement of the hardware. Which one was more effective in the real world is readily apparent.

Most training courses I have attended teach only the first concepts, if you even get those; there is simply too much information to absorb in a short space of time. Training courses are not always particularly efficient, and are subject to the same choices between Best Practice and Heuristics as the subjects they cover. But in fact you can break it down even more simply than this. It’s generally accepted that you typically encounter three main types of problem (certainly in training), at varying levels:

- Simple problems (You need to move the data off a volume. You click move in a clearly explained wizard. It moves.)

Obvious causality; known parameters for problem and resolution; correct answers exist and will be achieved through logic; resolution can be achieved by anyone.

You know what it does, and how to achieve it.

- Complicated problems (You need to move the data. You can spread it to multiple locations or send it to one but this requires decisions, knowledge and scoping. You consider then configure parameters and click move. It moves.)

Causality isn’t immediately obvious; parameters may be known but not completely; there may be multiple correct answers; expertise is required to resolve them.

You know what it should do, and how to work to resolve it if it doesn’t.

- Complex problems (You need to move the data. You cannot complete this via the wizard in the time allotted, external factors may or will interfere, you are not aware of all factors and cannot anticipate everything. Expertise doesn’t resolve the fundamental issue. You have to experiment to find another method. You test. You find the best possible path given constraints and work around the base issues. You shut it all down, copy the volumes, turn it all back on. It’s moved. You check it worked!)

Causality is unknown; parameters are unknown; there are no absolute “correct” answers; logic doesn’t resolve it; expertise alone is ineffective; innovation and lateral thinking are required.

You know what it should do but not why it doesn’t or how to resolve it. You must test different methods to find an immediate resolution.

At this point we start moving from troubleshooting closer to the realms of Cynefin, Dave Snowden’s framework for decision making (which has a few other domains less relevant to the core of this article). Liz Keogh, a very talented Agile Consultant and keynote speaker who speaks and teaches on this subject globally, has published a wealth of excellent material on it; I strongly recommend looking at her blog.

With the above problems, however, it becomes clear that Best Practice can be applied to the first, and at least Good Practice to the second, but neither to the last: Heuristics are required to resolve complexity because Best Practice simply cannot exist there. It is also worth noting that an issue does not always fall into just one of these areas!
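The mapping above can be written down as a toy decision helper. This is only an illustrative sketch of the three problem types described earlier, not an implementation of Cynefin; `ProblemType`, `classify` and its three yes/no questions are my own simplification, and, as just noted, real issues often straddle more than one type.

```python
from enum import Enum


class ProblemType(Enum):
    """Problem types from the list above, each with the practice that suits it."""
    SIMPLE = "Best Practice"
    COMPLICATED = "Good Practice (apply expertise)"
    COMPLEX = "Heuristics (experiment, then check it worked)"


def classify(cause_known: bool, cause_obvious: bool, parameters_known: bool) -> ProblemType:
    """Rough classification using the causality and parameter tests above."""
    if not cause_known:
        # Unknown causality: logic and expertise alone will not resolve it.
        return ProblemType.COMPLEX
    if cause_obvious and parameters_known:
        # Obvious causality and known parameters: anyone can follow the steps.
        return ProblemType.SIMPLE
    # Causality knowable but not obvious, or parameters only partly known.
    return ProblemType.COMPLICATED


# Wizard-driven move off one volume versus the live, constantly interrupted migration.
print(classify(True, True, True).value)     # Best Practice
print(classify(False, False, False).value)  # Heuristics (experiment, then check it worked)
```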

So how does a teacher best approach this with a class?

Applying this to teaching is an interesting conundrum, then, as by nature teaching is at the same time simple, complicated and complex. Cause and effect exists for most subjects, with basic troubleshooting. But you are also teaching students to diagnose, and to start down the path to expertise. The subject and the systems can usually be predicted, yet you have a class full of individual people, and you cannot predict their actions or responses. Effectively balancing atmosphere, skill level, collaborative potential, action, understanding, interest – and whatever wonderful technical issues they may throw up to learn from! – requires a flexible and innovative approach to each class. It may be best to consider each training solution a unique problem with several concurrent paths to resolution, and to balance logical process with flexibility to deliver the optimal mix of learning.

Classes for me are an incredible mixture of these concepts; teaching people how to teach is a very different prospect from simple subject knowledge transfer.

I have found over many years that, by and large, a class able to understand and apply Best Practice is also capable of deciding when and if to apply Best Practice – or Heuristics – but won’t necessarily do so. It is very easy to fall into the rut of teaching a class by rote, and subconsciously teaching them to follow rote themselves. Humans, adaptable as we are, prefer ease and comfort, and will often pursue them to our detriment. This is the darker side of Best Practice: following a set of steps without thought or reactivity, trying something again and again because it should work, and anything else is effort. Heuristics are effort. Let’s apply the principle of Occam’s Razor to this, then:

If what you are doing doesn’t work… do something else!

Resolving problems

I often find that a problem which is merely complicated in a sterile lab environment becomes complex in the real world, simply because of unknown variables and environments. This is also why I am not in favour of unrealistic teaching environments, which may teach only the shape of the spoon (Chapter 7, Involve Me). If you teach for real-world usage and problem solving, you must make your teaching as real-world as possible, or its application will be limited at best.

Best Practices can change and be refined over time, and must be constantly updated, but for a simple or complicated scenario they deliver a consistent result. Heuristics cannot be relied on for everything, and may not be optimal or concise, but they can be used to resolve a problem that does not conform to a Best Practice – in other words, a complex problem. When I’m teaching people how to teach, I encourage them to:

- Identify Best Practices

- Identify possible issues

- Be prepared to react Heuristically

- Identify if the issue is human-based or system-based

- Impart guidance on how to direct students to fix issues themselves

- Learn from doing!

This requires both proactive and reactive responses. A planned approach is key, but the ability to react and absorb changes is also critical and sometimes missed. As mentioned above, it is all too easy to fall into the habit of continuing to follow set instructions, and I see this in class a lot. If an approach doesn’t work, I often see it simply repeated, and then the student sits and frowns. This is in fact one reason I take a very flexible approach to all teaching and use little presentation or documentation for anything beyond conceptual or reference material: these can’t be changed on the fly, and when followed rigidly they leave less room for lateral thinking and for reacting to class dynamics, technical difficulties and so forth. Where Best Practice does not fulfil all criteria, Heuristics often can.

Humans can individually be wonderfully chaotic in approach, and you cannot predict people as easily as you can systems. We are where complexity is usually introduced (in IT there are multiple terms for this – I say “Chair-to-Keyboard interface error”, but you also have PEBCAK, PICNIC, and the wonderful Eastern European “The device in front of the monitor has a problem”. They all mean “human error”.) What this amounts to is that people break stuff: often, illogically, and sometimes gleefully. Best Practice usually works for systems, but not for humans.

Or:

Systems usually follow rules; people usually don’t.

In war it is oft-quoted that “no plan survives contact with the enemy”. If you stick rigidly to a plan despite changes in the enemy’s expected deployment and composition, you are likely to find the battle does not turn out as you hoped. In extremis, you throw things at the wall and see what sticks. This is where agility of mindset and lateral thinking are vital assets for both teachers and students.

A project is the same; a training course is the same. Teaching students flexibility of thought and logic of application is important, and it constantly improves your own. Whilst Best Practices are key to this in many industries, we must all be mindful of the surrounding situational modifiers. If a Best Practice fails, or doesn’t fit the requirements, heuristically define what is definitely and immediately effective, use it, and qualify it logically with real-world examples of why it worked. This is my final key point for students in my classes:

Use the appropriate method at the point of decision.

Ultimately, you can only provide the tools and the capability to use them effectively: you can only open the door. Walking through it is up to the students.